The Word They Cut

A series on reading the clinical AI literature carefully.

Close read of Brodeur et al. (Science 2026), the latest "AI matches doctors" headline. The 78/80 R-IDEA score reads more as a contamination signal than a capability claim. The kappa they did report is moderate. The kappa they did not report is the one that matters. Comparator prompts were not matched. The "better at triage" framing rests on a different experiment than the one that actually tested triage. The BIDMC comparison pools the weaker physician with the better one. Eight methodological problems below, plus what the paper would need to do to clear a deployment-grade review.

What this series is

There is a pattern in clinical AI publishing that has stopped being a coincidence. A frontier model gets benchmarked against physicians on cases that are almost certainly in its training data, scored on rubrics that reward its house style, and the result hits the wire as "AI matches doctors" before anyone has looked at the kappa. Press release moves faster than the supplementary materials, and the supplementary materials are where the paper actually lives.

This one is a case study. Science went live April 30, 2026. Same day, one of the senior authors had already dropped media coverage links (Guardian, Telegraph, Stat News) into the comments of their own LinkedIn announcement. Same day. That kind of timing makes me question intent. Was this work done to evaluate LLMs in medicine, or to generate splashy headlines and a name at the expense of the colleagues whose practice the paper claims to outperform? Let us investigate.

A fun aside before we go further. I have close friends who took part in labeling for studies in this family. One told me they forgot the assignment entirely, woke up an hour ahead of the submission deadline, and rushed to finish because they wanted the monetary incentive 😅. That is the actual gap between practice and whatever these studies are measuring.

Speaking of practice. I read a lot of this literature for my day job. I have never used a single piece of Clinical AI literature to design my experiments. ML literature, statistics literature, LLM literature, yes. Clinical AI literature, no. So I beg of you, reader: if you are implementing AI at a hospital, or you are a new hire at a Clinical AI company and you have no clue what you are doing, talk to biomedical PhD research scientists and statisticians to help you with your design. Do not follow whatever this is.

The bar I am holding the literature to in this series is "would the result survive a reasonably aggressive deployment review at a clinical AI company." I can't believe I'm saying this, but that bar now seems higher than peer review in our most prestigious journals, and certainly higher than press coverage. If the paper does not clear it, I want to know exactly which load-bearing assumption breaks.

Today's paper: Brodeur et al., Performance of a large language model on the reasoning tasks of a physician, Science, April 2026.

The word they cut

Before reading anything else, look at the arXiv preprint title and the Science title side by side.

arXiv: Superhuman performance of a large language model on the reasoning tasks of a physician.

Science: Performance of a large language model on the reasoning tasks of a physician.

Reviewers cut "superhuman." That edit is the most informative thing the peer review process produced. The people closest to the work, with the most domain context, would not put their imprimatur on the headline the authors wanted. Some publications would have softened "superhuman" by sliding it into the abstract or the discussion. Science removed it from the title. That means something and I give credit where it is due.

And then the authors recovered the equivalent splash through the press anyway. The same-day Guardian headline reads "AI outperforms doctors in Harvard trial of emergency triage diagnoses," which is functionally the rhetorical effect the cut word was reaching for. Whatever editorial restraint the journal exercised at the title bar got routed around the moment the press tour kicked off.

The Hopkins and Cornelisse Perspective that runs alongside the paper hedges in the discussion register. It politely points out that accuracy on a defined task is one dimension of clinical readiness, that equity, cost, safety, and monitoring are others, and that the AI is not ready.

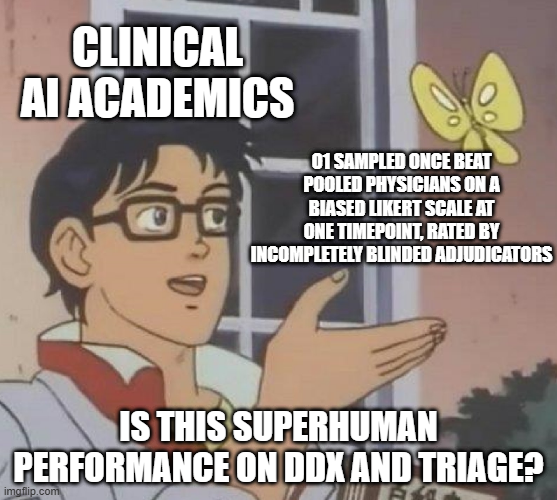

I belabor this because the LinkedIn coverage of the paper, including from co-authors, is rehabilitating the cut word as if it never got cut. The press framing is "outperformed physicians" with a halo effect that pulls "superhuman" along behind it, sometimes literally. The reviewers cut it. Honor that.

What the paper actually shows

Five vignette experiments inherited from prior ARISE network publications. One new real-world study at Beth Israel Deaconess.

The vignette experiments use NEJM CPCs and NEJM Healer cases as inputs. The model is o1-preview (at times incorrectly compared to 4o), released September 2024 with an October 2023 pretraining cutoff. The human comparators are historical, drawn from the prior ARISE papers, recruited 2022 through 2024 under different study conditions. The headline result from the vignette work is a perfect score on 78 of 80 cases on the R-IDEA rubric for clinical reasoning quality.

The BIDMC study is the new contribution. 76 cases, two attending physicians as the human comparator, two more attendings as blinded adjudicators, all at one academic medical center. Unstructured EHR text at three timepoints (initial ED triage, end of ED encounter, admission to medicine or ICU) was fed into o1, GPT-4o, and the human attendings, and the resulting differentials were Bond-scored. Proportion of differentials rated 4 or 5: 67.1 / 72.4 / 81.6 percent for o1 versus 55.3 / 61.8 / 78.9 percent for the better of the two human attendings. (One additional o1 touchpoint was unanswered; the authors imputed it as a Bond score of 0, which slightly lowers o1's reported score and goes against the paper's narrative. Worth flagging for even-handedness.)

The headline pulled into the press is "o1 outperforms physicians on differential diagnosis and is most decisively better at initial ED triage." Read more carefully, the paper supports something narrower. We will get there.

Problem one: contamination

NEJM CPCs are publicly available, indexed, archived, scraped, and almost certainly in the training corpus of every frontier model released in the last two years. NEJM Healer cases are on Aquifer's platform, also accessible. The authors flag contamination as a limitation and run a pre/post-cutoff sensitivity analysis as their probe (79.8 percent accuracy on 109 cases pre-October-2023, 73.5 percent on 34 cases post, p=0.59).

"But Karl, they did a sensitivity analysis." Yeah, n=34 with p=0.59. That is not power, that is hope. Pre/post cutoff is a weak contamination check on its face. Training data leaks past explicit cutoffs through derivative content, post-cutoff incorporations, and indirect citation. n=34 post-cutoff is severely underpowered to detect a meaningful contamination effect. The 6.3-point accuracy difference produces p=0.59 because the comparison cannot resolve the signal it is trying to detect. There is no held-out vignette set verifiably outside the training corpus, and no per-case provenance check.

I am honestly not sure why the authors did not do this. It feels exceptionally lazy. Maybe they wanted the weight of the NEJM brand behind the cases. At that rate, just hire the folks who write those cases to construct a true held-out set. This is basic ML. You could still note in the paper that the case-writers are the same people who write the NEJM originals, and the credibility carries.
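To make the "that is not power, that is hope" point concrete, here is a rough sketch of how little a 109-versus-34 split can resolve. It uses a normal-approximation two-proportion test and treats the reported accuracies as the true rates. Both are my assumptions for illustration, not the test the authors ran, and `two_proportion_power` is a hypothetical helper name.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_power(p1, n1, p2, n2, alpha=0.05):
    """Approximate power of a two-sided two-proportion z-test.

    Normal approximation; p1 and p2 are treated as the true rates,
    which is an assumption made for illustration only.
    """
    norm = NormalDist()
    z_crit = norm.inv_cdf(1 - alpha / 2)
    se = sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    shift = abs(p1 - p2) / se
    # Probability that the test statistic lands in either rejection region.
    return norm.cdf(shift - z_crit) + norm.cdf(-shift - z_crit)


# Figures quoted above: 79.8% on 109 pre-cutoff cases, 73.5% on 34 post-cutoff.
power_as_run = two_proportion_power(0.798, 109, 0.735, 34)

# Hypothetical design question: equal group size needed to reach 80% power.
n_per_group = next(
    n for n in range(10, 5000)
    if two_proportion_power(0.798, n, 0.735, n) >= 0.80
)

print(f"approximate power as run: {power_as_run:.2f}")
print(f"cases per group for 80% power: {n_per_group}")
```

Under these assumptions the as-run comparison has power well under 20 percent, and detecting a gap of this size reliably would take several hundred cases per group. A non-significant p-value from that design says almost nothing about contamination either way.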

Perfect R-IDEA scores on 78 of 80 cases should make you suspicious before they make you impressed. Frontier models do not produce that kind of saturation on genuine out-of-distribution diagnostic reasoning. The empirical reference points are not subtle.

OpenAI's own HealthBench, designed by 262 physicians across 60 countries with 48,562 rubric criteria, puts frontier models at 46.2 percent on its hard slice as of GPT-5. MedHELM across 35 medical benchmarks shows DeepSeek R1 and o3-mini, the two strongest reasoning models, at 66 and 64 percent win rates. LiveMedBench, a contamination-free medical benchmark from 2026, reports the best model at 39.2 percent and finds 84 percent of evaluated models degrade on post-cutoff cases. An NEJM AI script concordance study put o3 at 67.8 percent and explicitly noted that LLMs perform "markedly lower on SCT than their typical achievement on medical multiple-choice benchmarks."

So frontier models on rigorous, contamination-controlled medical benchmarks live in the 40 to 70 percent range. 97.5 percent (78 of 80) on a public benchmark is roughly twice what frontier models score on the hard slice of the most rigorous physician-graded eval available. That is a red flag, not a capability signal.

There is also a theoretical floor. Two independent formal results, Xu and colleagues' impossibility proof and the Kalai-Vempala calibrated-must-hallucinate theorem, show that hallucination is mathematically unavoidable for any calibrated LLM trained on finite data, with a lower bound on the hallucination rate that scales with the fraction of facts appearing only once in training. A model genuinely scoring 97.5 percent on a long-tailed distribution of diagnostic reasoning is either violating these bounds or performing recognition rather than reasoning. Probably the latter.

NEJM CPCs are near-worst-case contamination targets. Decades-long open archive, indexed across PubMed and dozens of medical-education sites, each case published with its canonical answer adjacent in the same article. Any plausibly large web-scraped corpus contains them. Healer cases are paywalled but appear in published clinical-reasoning education literature. Membership-inference attacks against frontier models "hardly surpass 0.6 ROC-AUC" in the contamination-detection literature, which means the standard detectors are not strong enough to clear o1-preview of having seen these cases.

Frontier models produce 97.5 percent saturation on tasks they have seen the answers to. "We acknowledge but did not address" is not a defense.

This matters because the vignette experiments are doing rhetorical work the BIDMC study cannot do alone. The BIDMC study has n=76 and two human comparators. The vignette experiments are what makes the headline generalizable. If the vignette work is contaminated, the generalization is unsupported.

Problem two: the rubrics reward o1's house style

The Bond scale and R-IDEA were designed for human reasoning artifacts. They reward structure, completeness, and well-organized exposition. These are exactly the features o1 produces by default and exactly the features a physician working under time pressure produces less of.

The adjudicators were blinded to source. Blinding to source does not give you blinding to style. If AI outputs are systematically longer, more structured, and more enumerated than human outputs, a rubric that rewards length and structure will score them higher even if the underlying clinical reasoning is identical or worse. The fix is length-matching the AI output to physician-typical length before adjudication. The paper does not do this.

This is a known confound. R-IDEA has shown rater drift toward verbose and well-structured artifacts in prior validation work. The right response is to demonstrate it does not apply here. Assuming it does not apply is not a response. And as Problem Three will show, the prompt design actively amplified the confound by handing the comparator a prompt strategy that suppressed the very style features the rubric rewards.

[Interactive figure: The Verbosity Confound. Same case, same five diagnoses, shown in two panels: a spelled-out differential (community-acquired pneumonia, pulmonary embolism, acute pericarditis, costochondritis, viral pleurisy) and a terse one (CAP, PE, pericarditis, costo, pleurisy). The panels converge at short length caps and diverge at long ones, while the Bond rubric rewards length and structure rather than reasoning quality. At the five-item cap the BIDMC source-attribution task used (CPC differentials were uncapped), both panels look nearly identical.]

Problem three: the prompts were not matched

The supplement contains a finding the main text does not surface: the prompts given to o1 and to its comparators were not matched. Two asymmetries, both undocumented in the headline framing.

The CPC asymmetry. The GPT-4 prompt for the CPC vignette experiments contains the instruction "You do not need to explain your reasoning, just give the diagnosis/diagnoses." That instruction appears twice in the prompt. The o1-preview prompt for the same experiments does not contain it. o1 was free to reason at length. GPT-4 was instructed to suppress reasoning.

Chain-of-thought prompting on GPT-4 was the original test-time compute lever before reasoning models existed. Telling GPT-4 to skip its reasoning leaves capability on the table, and the comparator is the very system supposed to exercise it. The Figure 2B comparison (o1 88.6 percent vs GPT-4 72.9 percent, p=0.015) is therefore "reasoning model with reasoning enabled by default" against "non-reasoning model with reasoning suppressed by prompt instruction."

The architectural difference between o1 and GPT-4 probably accounts for most of the 15.7-point gap. Probably most is not all. A properly conducted comparison would have given GPT-4 either default prompting or CoT-encouraged prompting and reported both. The paper does neither. The proportion of the gap that is architecture vs suppressed CoT is unrecoverable from the data as published.

This adds a second mechanism to the rubric-rewards-house-style argument. The rubric rewards o1's natural style. The prompt design actively handicapped the comparator on the dimension the rubric rewards.

The BIDMC asymmetry is harder to call directionally. The o1 prompt at the initial-triage timepoint is two sentences. It tells o1 it is assisting an ED doctor, asks for a prioritized differential, and notes that the input represents what was known at triage. That is the entire prompt.

The GPT-4o prompt for the same task is approximately four pages. It explicitly instructs the model to use a Tree of Thought reasoning approach, follow a five-phase reasoning structure (Data Restatement, Initial Diagnostic Considerations, Refinement and Prioritization, Cannot Miss Diagnoses, Final Output Construction), output in a specified format, and includes a fictitious example output.

Whether the GPT-4o scaffolding helped or hurt by overconstraining the format is genuinely ambiguous (could have done both, depending on the case). The defensible critique is narrower: o1 and GPT-4o were given materially different prompt strategies, which makes the model-vs-model comparison non-clean regardless of which way the asymmetry cuts. The headline "o1 outperformed GPT-4o" inherits a meaningful asterisk on those grounds alone.

The deeper pattern is that the paper is comparing model architectures while not holding prompt strategy constant. Architectural and prompt-strategy differences are confounded in every reported gap. The paper presents single numbers absorbing both.

There is a third layer of asymmetry the supplement is silent about. Methods document the o1 and GPT-4o prompts in full. They do not document the instructions given to the two human physician comparators. We are told only that "the same data were provided" and that "each physician generated their own second opinion differential." Whether the humans were given a "second opinion" framing, a cannot-miss flag instruction, format constraints, reference materials, or time limits is not in the paper or supplement. This is a transparency gap. The blinding procedure controls for output format differences across sources after the fact. It does not control for differences in generation conditions, and the paper provides no way to evaluate whether the humans were operating under task definitions matched to the LLM conditions.

[Interactive figure: Prompt Asymmetry. The model architectures differ, and so do the prompts. For the NEJM CPC vignettes, the two prompts are nearly identical except that GPT-4's contains the instruction "You do not need to explain your reasoning" twice and o1-preview's does not. Chain-of-thought is suppressed on the comparator and free on the model.]

Problem four: the indistinguishability shell game

Here is where the LinkedIn coverage is doing its most aggressive sleight of hand. The "raters could not tell AI from human differentials" framing is being used as a Turing-test pass. It is not one. The framing also belongs to a specific experiment: the BIDMC source-attribution task, which used differentials capped at five items per the Figure 5 caption. The CPC differentials were not capped, and source attribution was not run on them. The aleatoric/epistemic argument below scopes to the BIDMC rating task it was originally constructed against.

The numbers. Rater one answered "can't tell" on 83.6 percent of the 911 ratings, correctly attributed source in 15.2 percent, and was wrong on 1.2 percent. Rater two answered "can't tell" on 94.4 percent, correctly attributed in 3.1 percent, and was wrong on 2.5 percent. Rater two's correct attributions barely exceed their incorrect attributions on the small subset they did not punt on. The raw spread between raters on "can't tell" is 10.8 percentage points. Rater two also failed to record any guess at all on 79 of 912 cases (8.7 percent), which is itself a behavioral signal about how the rating task was being engaged. Nearly one in eleven cases skipped outright by one rater, separate from the punting visible in the "can't tell" rate.

The paper reports kappa = 0.51 for the Bond quality scoring task (54 percent raw agreement on 911 ratings). For the source-attribution task, the main text reports only raw percentages and points to a supplementary table for the breakdown. The methods section says a linear-weighted Cohen's kappa was calculated for source attribution. Linear-weighted kappa is for ordinal data. AI / Human / Can't tell are nominal categories. So either the methods description is loose and they actually used unweighted kappa, or they used a weighted statistic on nominal data, which would be a mismatch with the task. Either way, the appropriate statistic for an imbalanced nominal rating task with one category dominating at 94 percent is prevalence-adjusted, bias-adjusted kappa (PABAK) or Gwet's AC1. Neither is reported.

Two things to flag.

First, the kappa they did report is moderate at best by Landis and Koch conventions, and that kappa is on the rubric driving the headline accuracy claim. A Science paper claiming AI exceeds physicians on a Bond-scored task should produce kappa above 0.6 at minimum, ideally above 0.7. 0.51 with 54 percent raw agreement on the underlying ratings is a softer foundation than the headline implies.

Second, the statistic that matters for the indistinguishability claim is not in the main text. When one rater answers "can't tell" 94 percent of the time, raw agreement is dominated by base rates. PABAK is exactly the statistic that surfaces this kind of pattern. Burying it in the supplement (or, worse, computing a statistic appropriate for ordinal data on nominal data and not reporting the result) while the main text leans on raw percentages is a choice. The 10.8-point spread between raters and the 8.7 percent missing-data rate are the symptoms that should have moved that calculation forward.
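A small sketch of why the choice of statistic matters here. The 3x3 table below is entirely hypothetical: I made up counts so that rater one says "can't tell" 84 percent of the time and rater two 94 percent, roughly echoing the marginals above. It is not the paper's data, and `agreement_stats` is an illustrative helper, not anyone's published code.

```python
def agreement_stats(table):
    """Raw agreement, unweighted Cohen's kappa, PABAK, and Gwet's AC1
    for a square rater-by-rater contingency table (nominal categories)."""
    k = len(table)
    n = sum(sum(row) for row in table)
    p_obs = sum(table[i][i] for i in range(k)) / n

    row = [sum(table[i]) / n for i in range(k)]                       # rater 1 marginals
    col = [sum(table[i][j] for i in range(k)) / n for j in range(k)]  # rater 2 marginals

    # Cohen's kappa: chance agreement from the product of the marginals.
    p_exp = sum(r * c for r, c in zip(row, col))
    kappa = (p_obs - p_exp) / (1 - p_exp)

    # PABAK: chance agreement fixed at 1/k, ignoring prevalence and bias.
    pabak = (k * p_obs - 1) / (k - 1)

    # Gwet's AC1: chance agreement from the averaged marginals.
    pi = [(r + c) / 2 for r, c in zip(row, col)]
    p_exp_ac1 = sum(p * (1 - p) for p in pi) / (k - 1)
    ac1 = (p_obs - p_exp_ac1) / (1 - p_exp_ac1)

    return {"raw": p_obs, "kappa": kappa, "pabak": pabak, "ac1": ac1}


# HYPOTHETICAL counts. Rows: rater 1, columns: rater 2.
# Category order: AI, Human, Can't tell.
table = [
    [20, 3, 57],
    [3, 14, 63],
    [7, 13, 820],
]

stats = agreement_stats(table)
for name, value in stats.items():
    print(f"{name:>5}: {value:.3f}")
```

On this made-up table raw agreement is about 85 percent, Cohen's kappa lands near 0.3, and PABAK and AC1 both sit near 0.8. Same ratings, very different stories, which is why a paper leaning on an indistinguishability claim should say which statistic it computed and show the number.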

There is a deeper methodological problem the kappa discussion only gestures at. The rating task has two distinct sources of uncertainty about source. Aleatoric uncertainty about source is the irreducible kind: the differentials are genuinely indistinguishable, no rater could tell, and "can't tell" is an honest answer. Epistemic uncertainty about source is recoverable: a rater could tell with effort, but the protocol does not ask them to, or makes "can't tell" the path of least resistance, or fails to compensate for the stylistic giveaways that mark o1 outputs as o1 outputs.

The Brodeur protocol gave raters three options (AI / Human / Can't tell) with no incentive structure favoring effortful attribution. It asked whether raters discriminate by default. The question that supports the indistinguishability claim is whether raters are able to discriminate. A 94 percent "can't tell" rate is consistent with "these outputs are aleatorically indistinguishable" and with "raters defaulted because the task did not push them off the default." The data does not let us tell which (anyone who wrangles clinical labelers for a living like myself knows this).

This matters because the headline accuracy claim depends on Bond-scoring being blind to source in the first sense and not the second. If raters can tell AI from human, even tacitly, and AI outputs share recoverable stylistic features that the Bond rubric independently rewards (length, structure, enumeration), then the "blind" Bond scores are not blind. They are style scores with rater awareness of source feeding through. Blinding to source is a methodological floor for the entire study. If the floor is soft, every accuracy claim built on top of it inherits the softness.

The deeper issue is that even a clean indistinguishability finding would not support the second-opinion product narrative the paper is being marketed as supporting. The value of an AI second opinion is precisely that it has different failure modes than the human first opinion. Indistinguishability is anti-correlated with that value. If the AI's differential is identical in every recoverable way to the attending's differential, you have built an expensive copy. The cheaper move is calling another physician.

[Interactive figure: Cohen’s κ vs Raw Agreement. Two raters, three response categories, for either source attribution (AI / Human / Can’t tell) or bucketed Bond quality scoring (Low / Mid / High). As one category comes to dominate, raw agreement climbs past 90 percent while κ collapses toward zero. What the paper actually reports for source attribution: ~84% / ~94% "can't tell." κ was not computed in the main text.]

Problem five: variance asymmetry

In the management reasoning experiment using Grey Matters cases, o1 was sampled three times per case. GPT-4 was sampled five times per case. Physicians provided 178 to 197 responses across the five cases. Headline is that o1's median score (89 percent, IQR 79-91) exceeded GPT-4 and physicians.

Three samples give you a variance estimate. They do not give you a stable median against a distribution of nearly two hundred physician responses. The comparison is asymmetric in the direction the headline depends on. The 95 percent confidence interval on a three-sample estimate is wide. "Exceeds physicians" assumes those three o1 draws are a reasonable estimate of central tendency. The experiment as run cannot rule out that they are not.

The Landmark Diagnostic Cases experiment is the cleaner version of the critique. Each o1-preview response was generated once per case according to the Science methods. There the comparison is a single observation against a distribution. With n=1, the variance is undefined and the 95 percent confidence interval is unbounded. "Exceeds physicians" with n=1 is statistical nonsense.

The fix in both cases is to sample o1 the same number of times as the comparator and report the resulting confidence interval. This is not a hard fix. It costs API credits. The paper did not do it.
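For a sense of scale, here is a minimal sketch of a 95 percent interval on the mean of a handful of draws. The scores are hypothetical placeholders, not the paper's per-sample data, and I use the mean with a Student-t interval for simplicity even though the paper reports medians. The critical values are standard table values; `mean_ci95` is an illustrative name.

```python
from math import inf, sqrt
from statistics import mean, stdev

# Two-sided 95% Student-t critical values, keyed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 9: 2.262}


def mean_ci95(samples):
    """Return (mean, lower, upper) for a 95% t-interval on the mean.

    With a single sample the variance is undefined, so the interval
    is reported as unbounded.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("need at least one sample")
    m = mean(samples)
    if n == 1:
        return m, -inf, inf
    df = n - 1
    if df not in T_CRIT_95:
        raise ValueError(f"no critical value tabulated for df={df}")
    half_width = T_CRIT_95[df] * stdev(samples) / sqrt(n)
    return m, m - half_width, m + half_width


# HYPOTHETICAL scores for one case, in percent.
three_draws = [79, 89, 91]
one_draw = [89]

print("n=3:", mean_ci95(three_draws))
print("n=1:", mean_ci95(one_draw))
```

With these placeholder numbers the n=3 interval spans more than thirty points, and the n=1 interval is unbounded by construction. Matching the sample count to the comparator is what shrinks that.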

[Interactive figure: Variance Asymmetry. The same data in two framings. As reported, o1 wins. With uncertainty visible, the picture changes, sharply for the n=1 experiment and more subtly for the n=3 experiment. Management reasoning: o1 was sampled three times per case in the Science version; the asymmetry is real but not catastrophic.]

Problem six: the triage framing slippage

The press and LinkedIn coverage describe the paper's strongest finding as o1 outperforming physicians at "initial ED triage." This is borrowing the publicity halo of the BIDMC study to describe a finding that is actually about something else.

The BIDMC study is a differential diagnosis quality study. The model and the human attendings are given unstructured EHR text at three timepoints, they produce differentials, and the differentials get Bond-scored. One of the timepoints is labeled "ED triage." Producing a high-quality differential when handed a triage note is a different task from triaging a patient. Triage is acuity assignment. Triage is disposition. Triage is deciding who needs to be seen now and who can wait and who needs immediate intervention. The paper does not test any of these.

There is one regime where DDx quality and triage decisions converge mechanically: when the top differential is itself an obviously emergent diagnosis that maps directly to immediate intervention. STEMI on a troponin and a chest-pain story. Hemorrhagic stroke on imaging. Anaphylaxis with stridor. Septic shock with lactate of nine. In those cases, naming the diagnosis is naming the disposition, and "high-quality differential" is functionally equivalent to "right triage call." But those are also exactly the cases where plain pattern matching already works, the bottom 80 percent of medicine. Any reasonably attentive medical student gets there. So does Boolean logic from 1972.

The triage decisions that actually require judgment are the ones where the top differential is uncertain or atypical. The 80 year old with vague abdominal pain. The well-appearing patient whose pretest probability for aortic dissection is not obvious from the chart. The patient whose presentation hides the dangerous diagnosis behind two more probable benign ones. At these edges the right triage is "work it up before you commit to the diagnosis you cannot rule out yet" or "admit and observe." DDx quality on text inputs does not transfer to triage quality at this layer because at this layer triage is a decision under irreducible uncertainty about which diagnosis you are still allowed to defer.

To be clear: the Brodeur paper does contain one experiment that tests triage decisions in an operational sense. The cannot-miss-diagnosis inclusion experiment at initial triage presentation. It compares whether o1, GPT-4, attendings, and residents include the dangerous diagnoses you must not miss. The result there is "ns." Not significant. Across all four sources.

So the only experiment in the paper that actually tested triage decisions returned a null result, and the headline framing is about a different experiment that tested something else under a label that contains the word "triage." The paper's own Discussion section concedes the point: "Decisions in the emergency department are often centered around triage, disposition, and immediate management and not diagnostic accuracy." That sentence convicts the headline.

One more layer of slippage. The human physicians in the BIDMC study were not the clinicians who saw these patients. They were two co-authors at BIDMC who were given the same retrospective EHR text the AI received and asked to generate differentials in February 2025. The human comparison condition was unhurried, focused, and academic. Real ED triage involves time pressure, interruption, parallel workflows, and fatigue, none of which the human comparators faced. If anything, the conditions favored the humans. The "second opinion" framing is being applied in a research environment that does not actually correspond to the deployment environment a real second-opinion product would face.

One further note on the BIDMC comparison. The reported statistical test is a mixed-effects logistic regression that pools Physician 1 and Physician 2 into a single "Physicians" condition before comparing against o1, with case ID as a random effect. Physician 2 was substantially weaker (50.0 / 52.6 / 69.7 percent Bond 4-5) than Physician 1 (55.3 / 61.8 / 78.9). Pooling drags the physician baseline down. The headline "o1 outperformed physicians" is doing arithmetic against the pool, and most readers will assume the comparison was against the better physician.
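The effect of pooling is easy to see with the percentages quoted above. This sketch uses a simple equal-weight average of the two physicians as a stand-in for the pooled condition. That is my simplification: the paper's mixed-effects logistic regression is not a plain average, so read the output as a direction-of-effect illustration only.

```python
TIMEPOINTS = ["ED triage", "end of ED encounter", "admission"]

# Percent of differentials rated Bond 4 or 5, as quoted in the text.
O1 = [67.1, 72.4, 81.6]
PHYSICIAN_1 = [55.3, 61.8, 78.9]
PHYSICIAN_2 = [50.0, 52.6, 69.7]


def gaps(o1, p1, p2):
    """Compare o1 against an equal-weight pool and against the better physician."""
    pooled = (p1 + p2) / 2  # assumption: both physicians weighted equally
    return {
        "pooled": pooled,
        "gap_vs_pool": o1 - pooled,
        "gap_vs_better": o1 - max(p1, p2),
    }


rows = [gaps(*values) for values in zip(O1, PHYSICIAN_1, PHYSICIAN_2)]

for label, row in zip(TIMEPOINTS, rows):
    print(
        f"{label:>20}: pool {row['pooled']:.1f}%, "
        f"o1 minus pool {row['gap_vs_pool']:.1f}, "
        f"o1 minus better physician {row['gap_vs_better']:.1f}"
    )
```

Under that simplification the admission-timepoint gap is about 7.3 points against the pool and 2.7 points against the better physician. The triage gap shrinks too, from roughly 14.5 to 11.8.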

The only solid claim from the BIDMC study imho is: on Bond-scored differential diagnosis quality, given the unstructured EHR context available at three timepoints in 76 BIDMC cases, o1 produced differentials that two incompletely-blinded attendings rated 4 or 5 more often than the differentials produced by two other BIDMC attendings, with the comparison run against a pool that drags the human baseline below the better physician's individual performance. lol cool? Another benchmark claim in the big 2026.

[Interactive figure: The Bond Trajectory. Three timepoints in the BIDMC encounter. Both lines rise and the gap shrinks. One view shows what most readers assume the headline means. Both sources received identical text at each timepoint. The asymmetry is in generation conditions, not information access. The AI processes whatever is on the page in research-task mode at full attention. The human comparators were two BIDMC co-authors reading retrospective EHR text in February 2025, also in research-task mode, focused and unhurried. Real ED triage looks like neither.]

Problem seven: out of distribution

Here I want to be careful. I am honestly agnostic on the claim that LLMs can outdiagnose clinicians on most cases. The bottom 80 percent of medicine is pattern matching, and frontier models are very good at pattern matching. If a 58 year old with substernal chest pain, diaphoresis, and a troponin of 4.2 walks into the ED, you do not need a frontier reasoning model to put STEMI at the top of the differential. A reasonably attentive medical student gets there. So does a Boolean expression from 1972.

The cases where a second opinion actually matters are the cases where the diagnosis is not obvious. Atypical presentations. Rare diseases. Demographic mismatches between patient and training corpus. Drug interactions outside the literature. The 80 year old woman with vague abdominal pain who is having an MI because she is 80 and a woman and atypical presentation is the typical presentation. Behçet's in someone whose surname appears in the training corpus four times. Endocarditis in an IV drug user where the model goes evasive on its top diagnosis because the demographic distribution of the training data does not match the demographic distribution of the patient.

The pattern that should worry us is what happens to these models when they leave distribution.

Mirzadeh and colleagues published GSM-Symbolic in late 2024. They took grade-school math word problems and added a single irrelevant clause ("five of the kiwis on Saturday were smaller than average," a sentence with no bearing on the arithmetic), and watched accuracy on GPT-4o drop by up to 32 points. Smaller models lost up to 65 points. They also changed only the names and numbers in the same problem and got measurable accuracy variance with no semantic change. Polite way of saying the models are not actually doing the arithmetic, they are pattern-matching to surface features of the problem.
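The perturbation idea is simple enough to sketch. What follows is my own toy template built around the kiwi example, not the GSM-Symbolic authors' code or templates; the names, number ranges, and `make_variant` helper are all hypothetical. The point is only that the ground-truth answer is computed from the same formula whether or not the irrelevant clause is present.

```python
import random

TEMPLATE = (
    "{name} picks {fri} kiwis on Friday and {sat} kiwis on Saturday. "
    "{noop}On Sunday, {name} picks double the number picked on Friday. "
    "How many kiwis does {name} have?"
)

# Irrelevant clause: changes the surface text, not the arithmetic.
NOOP_CLAUSE = "Five of the kiwis on Saturday were smaller than average. "


def make_variant(rng, perturb_surface=False, add_noop=False):
    """Build one problem variant and its ground-truth answer."""
    if perturb_surface:
        name = rng.choice(["Maya", "Tomas", "Priya"])  # hypothetical names
        fri, sat = rng.randint(20, 90), rng.randint(20, 90)
    else:
        name, fri, sat = "Oliver", 44, 58
    question = TEMPLATE.format(
        name=name, fri=fri, sat=sat, noop=NOOP_CLAUSE if add_noop else ""
    )
    answer = fri + sat + 2 * fri  # Friday + Saturday + double Friday
    return question, answer


rng = random.Random(0)
base_q, base_a = make_variant(rng)
noop_q, noop_a = make_variant(rng, add_noop=True)
swap_q, swap_a = make_variant(rng, perturb_surface=True)

for question, answer in [(base_q, base_a), (noop_q, noop_a), (swap_q, swap_a)]:
    print(answer, "|", question)
```

An evaluation harness would feed each variant to the model and compare against `answer`. A model doing the arithmetic should be indifferent to both toggles.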

The Apple "Illusion of Thinking" paper that followed showed reasoning models including o1 failing on Tower of Hanoi past about eight disks, which got vigorously rebutted by Lawsen for output-token truncation issues, which got re-rebutted by an independent CSIC replication that controlled for the truncation and still found degradation. The truth is somewhere in the middle. The point is that model behavior on slightly out-of-distribution puzzles is fragile in ways that contemporary evaluation methodology systematically misses.

In the medical domain specifically, van Kessel and colleagues published a study earlier this year showing that GPT-4.1 hallucinated UK clinical guidelines for type 2 diabetes management at roughly ten times the rate it hallucinated location-agnostic guidelines (adjusted prevalence ratio 10.03, 95 percent HDI 4.90 to 21.61). Accurate UK hypercholesterolaemia guideline citation dropped to 30 percent of the location-agnostic baseline. Same medical content, swap the location string in the prompt, get a meaningfully different distribution of confidently wrong outputs. DeepSeek-V3 was worse, with UK accurate citation collapsing to essentially zero. The mechanism is training-data representation. (Reasoning models were not tested in that paper and I would like to see the replication.)

If your evaluation methodology is NEJM CPCs and NEJM Healer cases, what you are measuring is the part of medicine that already works pretty well. Cookie-cutter medicine is genuinely fine. It does not need a Science paper. You can cover most of it with UpToDate and an alert PA. The question that determines whether deploying these systems improves or harms patient care is what they do at the edges, which Brodeur et al. do not test.

Interactive figure: The GSM-Symbolic Cliff. A grade-school math problem, three frontier models, and two toggles that change nothing about the underlying arithmetic, with accuracy bars for each model.

Example problem: "Oliver picks 44 kiwis on Friday and 58 kiwis on Saturday. On Sunday, he picks double the number he picked on Friday. How many kiwis does Oliver have?"
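For readers who want to see why the two perturbations are semantically inert, here is a minimal Python sketch of a GSM-Symbolic-style variant generator built around the kiwi problem. It is my own illustration, not the authors' code. The template wording, the name list, the number ranges, and the function names (make_variant, ground_truth) are all assumptions. What it shows is that the correct answer depends only on the Friday and Saturday counts, so the irrelevant clause cannot change it and resampled variants have a trivially computable ground truth.

```python
import random

# Hypothetical template modeled on the kiwi problem above.
# This is an illustration, not the GSM-Symbolic authors' code.
TEMPLATE = (
    "{name} picks {fri} kiwis on Friday and {sat} kiwis on Saturday. "
    "On Sunday, {name} picks double the number picked on Friday.{noop} "
    "How many kiwis does {name} have?"
)
# Irrelevant clause: mentions kiwis but has no bearing on the count.
NOOP_CLAUSE = " Five of the kiwis on Saturday were smaller than average."
NAMES = ["Oliver", "Priya", "Mateo", "Aiko"]  # illustrative names only


def ground_truth(fri: int, sat: int) -> int:
    """Friday + Saturday + Sunday, where Sunday is double Friday."""
    return fri + sat + 2 * fri


def make_variant(rng: random.Random, resample: bool = False,
                 add_noop: bool = False) -> dict:
    """Build one problem variant and its correct answer.

    resample: swap in a random name and random counts (same structure).
    add_noop: append the irrelevant clause.
    """
    name, fri, sat = "Oliver", 44, 58
    if resample:
        name = rng.choice(NAMES)
        fri, sat = rng.randint(10, 99), rng.randint(10, 99)
    text = TEMPLATE.format(
        name=name, fri=fri, sat=sat, noop=NOOP_CLAUSE if add_noop else ""
    )
    return {"text": text, "fri": fri, "sat": sat,
            "answer": ground_truth(fri, sat)}


rng = random.Random(0)  # fixed seed so the variants are reproducible
base = make_variant(rng)
noop = make_variant(rng, add_noop=True)
resampled = make_variant(rng, resample=True)

print(base["answer"], noop["answer"])  # both 190: the clause changes nothing
print(resampled["text"], "->", resampled["answer"])
```

An evaluation built on this would score a model against the answer field across many seeds and both toggles. Any accuracy gap between the base and perturbed sets is then attributable to surface form, since the arithmetic is identical by construction.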

Problem eight: this is the wrong question

Goh and colleagues published an RCT in JAMA Network Open in 2024 showing that physicians using GPT-4 did not outperform GPT-4 alone, the more uncomfortable finding the field keeps re-deriving. Hager and colleagues published similar deflationary evidence on clinical decision-making the same year.

The interesting question in 2026 is no longer "can a frontier model beat a Bond-scored differential." We have benchmarked that question to death, both in the contaminated regime and in the uncontaminated regime, and we know the answer is "yes for the easy cases, unclear for the hard cases, and the easy cases are the ones we already had decision support for." The interesting question is whether putting these models in the workflow improves any patient-relevant outcome, and whether human-AI integration patterns are additive or subtractive. Goh suggests subtractive. Brodeur does not address this question.

By April 2026, o1-preview is several generations stale and the paper itself concedes it has been supplanted by newer models. Table S3 reports inference-cost data on o3, with no o1 data, and the explanatory note says "Analysis with o1 was not performed because the model was deprecated during the study period." The authors had to swap models mid-study because o1 went away under them. If the bar for a Science paper is "frontier model beats vignette rubric," we will publish the same paper every six months until 2030 with progressively newer model names in the abstract.

The bar should be: 30-day missed diagnosis rate, time to correct disposition, downstream resource utilization, mortality, and integration patterns that demonstrably add rather than substitute. The authors close their discussion with "motivating the urgent need for prospective trials," which is the polite way of admitting this is benchmark work.

What this paper would have to do to clear the bar

Held-out vignette set verifiably outside any frontier model's training corpus. If you cannot construct one, run a real contamination probe and report the per-case results. "We acknowledge this limitation" does not count, and a 109-vs-34 pre/post-cutoff comparison does not either.

Concurrent human comparators recruited under the same conditions as the AI evaluation. Historical comparators from prior studies are a convenience the field should stop accepting.

Length-matched AI outputs adjudicated against human outputs of similar length. If the rubric is truly source-blind, prove it survives a length match.

Matched prompts across all model conditions, or report results across multiple prompt strategies for each model. Comparing "reasoning model with reasoning enabled by default" against "non-reasoning model with reasoning suppressed by prompt instruction" is not a comparison of architectures.

Document the instructions, time constraints, reference materials, and task framing given to all human comparators with at least the same fidelity as the prompts given to the AI conditions. "The same data were provided" is not a task definition.

Equal-n sampling for all AI conditions. If physicians are sampled hundreds of times and o1 is sampled once, the comparison is a category error.

Cohen's kappa with prevalence and bias adjustment for any task with imbalanced response categories, computed and reported in the main text. Raw agreement is not a substitute. A weighted statistic on nominal data is not a substitute either.

A genuine triage-decision experiment if you want to make triage claims. Acuity assignment, disposition, time to intervention. Bond scores at a timepoint labeled "triage" do not get you there. And report the comparison against the better human, not the pool.

At least one out-of-distribution stress test. Demographic shift, presentation atypicality, location-conditioned guideline accuracy. Something that surfaces what the model does when it is not on rails.

Pre-registered protocol with sample-size justification. The MDAR checklist for this paper marks pre-registration, sample-size determination, randomization, blinding, and inclusion/exclusion criteria as "n/a." Some of those n/a entries are partly defensible because MDAR is designed for life science studies. Marking blinding as n/a when the paper has a formal blinding procedure described in the methods is not.

Patient-relevant outcomes if you want to make deployment claims. If the paper is a benchmark paper, call it a benchmark paper and stop calling it second-opinion validation.

The paper as published clears one of these. I will let you guess which.
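To make the kappa item in the list above concrete, here is a minimal Python sketch of why raw agreement, Cohen's kappa, and a prevalence- and bias-adjusted kappa (PABAK) can tell three different stories on imbalanced categories. The ratings are hypothetical numbers I made up for illustration, not data from the paper, and the function name agreement_stats is my own. For more than two categories I am assuming the Brennan-Prediger form (k * p_o - 1) / (k - 1), which reduces to 2 * p_o - 1 in the binary case.

```python
from collections import Counter


def agreement_stats(rater_a, rater_b, categories=None):
    """Raw agreement, Cohen's kappa, and PABAK for two raters.

    categories: optional explicit category list; defaults to the labels
    that actually appear in either rater's output.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of cases")
    n = len(rater_a)
    cats = list(categories) if categories else sorted(set(rater_a) | set(rater_b))
    k = len(cats)

    # Observed agreement: fraction of cases with identical labels.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement from each rater's marginal label frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    p_e = sum((count_a[c] / n) * (count_b[c] / n) for c in cats)

    kappa = (p_o - p_e) / (1 - p_e) if p_e < 1 else float("nan")
    # PABAK replaces observed marginals with uniform ones (chance = 1/k).
    pabak = (k * p_o - 1) / (k - 1) if k > 1 else float("nan")
    return {"raw_agreement": p_o, "kappa": kappa, "pabak": pabak}


# Hypothetical, heavily imbalanced binary task over 100 cases:
# 90 both "yes", 4 + 4 disagreements, 2 both "no".
rater_a = ["yes"] * 90 + ["yes"] * 4 + ["no"] * 4 + ["no"] * 2
rater_b = ["yes"] * 90 + ["no"] * 4 + ["yes"] * 4 + ["no"] * 2

stats = agreement_stats(rater_a, rater_b)
for name, value in stats.items():
    print(f"{name}: {value:.2f}")
```

On these made-up ratings, raw agreement is 0.92, Cohen's kappa is about 0.29, and PABAK is 0.84. The same table supports "excellent agreement" or "fair agreement" depending on which number gets reported, which is why all three belong in the main text.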

What is next

This series will run roughly monthly. Upcoming installments, which I am committing to draft in the order I find most worth writing:

- The Goh RCT and the integration question. Why physicians plus GPT-4 did not beat GPT-4 alone, what that means for second-opinion product narratives, and why nobody wants to talk about it.

- Hager et al. on clinical decision-making and what "clinical decision-making" actually means in benchmark context.

- Epic's Agent Factory positioning. A database platform play dressed up as a clinical AI play, and why the EHR vendors are unlikely to dominate this layer.

- Van Kessel et al. and the location-conditioning problem. The full version of the OOD argument I gestured at here.

- Omar et al. on sociodemographic bias in LLM medical decision-making. The companion piece to van Kessel.

- A retrospective on the Epic sepsis model failure as a case study in how clinical AI marketing gets reality-checked at deployment.

These are all open access or arXiv-available. I will link primary sources in each post and avoid press-release framing where I can.

The series mission is simple. Read the paper. Skip the press release. Hold the field to deployment-grade standards. Be specific about which assumption is load-bearing and where it breaks. The clinical AI literature is in a phase where the methodological vulnerabilities are getting easier to spot and the publicity machinery is getting better at hiding them. Both of those trends need an actively skeptical reader to balance them. That is what this is.

Until next month.

Footnotes

Goh E, et al. Large language model influence on diagnostic reasoning: A randomized clinical trial. JAMA Network Open. 2024.

Hager P, et al. Evaluation and mitigation of the limitations of large language models in clinical decision-making. Nature Medicine. 2024.